Stop Mapping Workflows. Start Mapping Data.

Why AI keeps getting the wrong answer in HUB operations — and what to build instead.

3/9/20267 min read

Most pharma AI programs begin the same way.

A workshop. A whiteboard. A room full of process owners mapping every step of the patient journey — intake and enrollment, benefits investigation, prior authorization, specialty pharmacy triage, co-pay assistance, adherence follow-up. Six weeks and several consultants later, you have a beautiful swimlane diagram covering every handoff from prescriber to patient.

Then you hand it to the AI team.

This is where the problem starts. Not because the workflow doesn’t matter — but because the workflow is downstream of something nobody mapped: the data.

The Wrong Question

Most pharma AI programs are asking the wrong question from day one. Not the wrong vendor question or the wrong build-vs-buy question. The wrong starting question.

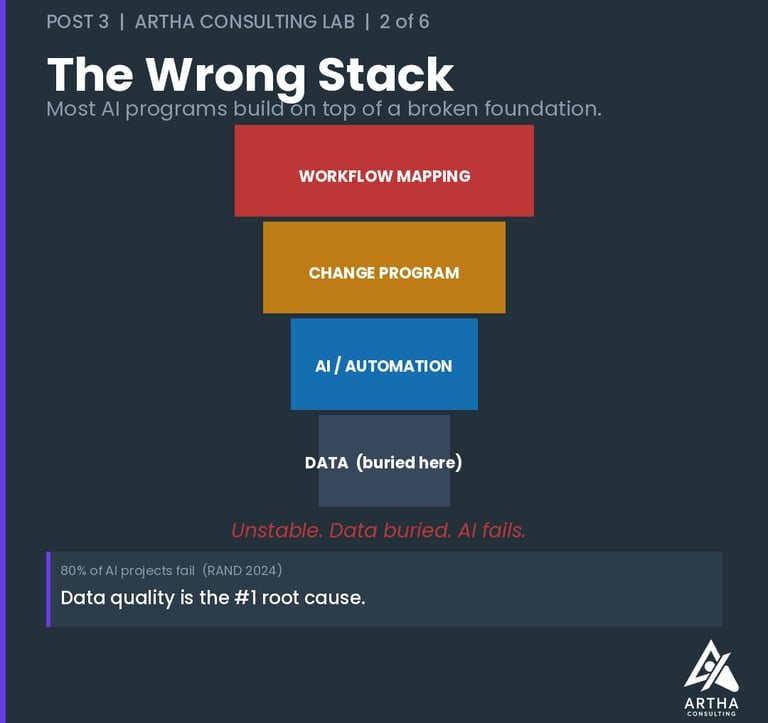

Everyone asks: Which workflows should we automate?

Almost nobody asks: Is our data in good enough shape for AI to trust?

The evidence on what that costs is consistent. A 2024 RAND Corporation study — based on interviews with 65 experienced data scientists and engineers — found that more than 80% of AI projects fail, twice the failure rate of conventional IT projects. The researchers identified data quality and organizational readiness as the primary root causes, not model capability or technology choice. In healthcare specifically, organizations typically spend 12–18 months and 60–70% of their total project budgets just on data integration work before AI can do anything useful. Gartner confirms the pattern from a different angle: only 48% of AI projects ever make it past the pilot phase. The technology isn’t the constraint. The data is.

In HUB operations, that second question exposes a structural problem that has existed for decades. Patient data sits fragmented across manufacturer CRM systems, HUB platforms, specialty pharmacy networks, payer portals, and EHRs — each speaking a different language, each updated on a different cycle. Benefits investigation data lives in one place. Prior authorization outcomes live in another. Case manager notes are free text. IVR call data is unstructured audio. And the outcome loop — what actually happened to the patient after triage, after the prior auth was approved or denied, after the co-pay program kicked in — is almost never closed.

You can build AI on this foundation. It will keep getting the wrong answer. Not because the model is bad, but because what goes in determines what comes out — and in most HUB environments, what goes in is fragmented, unstructured, and incomplete.

Why It Keeps Stalling

The evidence on this is consistent across independent sources. A 2025 IQVIA analysis found that only 11% of life sciences organizations have data that is comprehensively organized and accessible for AI applications. PwC puts the problem in starker terms: 61% of organizations say their data assets aren’t ready for AI — and they know it before they launch. Quest’s 2024 State of Data Intelligence Report found that 37% of organizations cite data quality as their single biggest obstacle to strategic AI use.

These aren’t implementation failures. They’re sequencing failures.

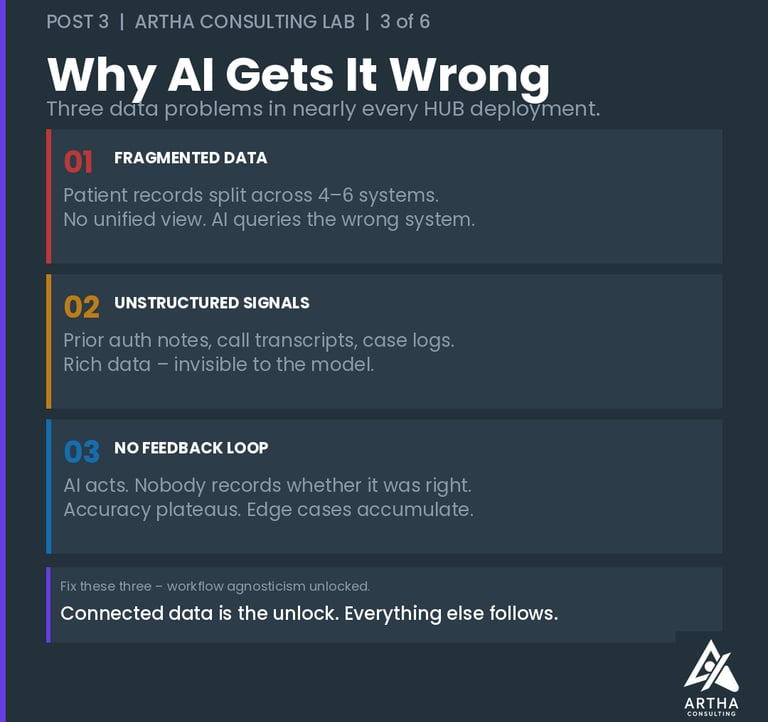

In HUB operations specifically, three data problems surface in nearly every deployment:

Fragmented patient data. A single patient case touches the manufacturer’s access team, the HUB’s case managers, the field reimbursement manager, the specialty pharmacy, and often a co-pay vendor. Each system holds a piece of the patient record. No single system holds the whole picture. AI that queries one system without the others makes decisions on an incomplete case — and in prior authorization or patient triage, an incomplete case is a dangerous one. Fragmented data isn’t just an operational inefficiency — it is the mechanism through which disconnected systems produce disconnected care. That’s a problem I’ll come back to in the next post.

Unstructured operational data. The richest signals in HUB operations — why a prior auth was denied, what a nurse case manager said on the follow-up call, what the prescriber flagged during the benefits investigation — live in free-text notes and call transcripts. They exist. They’re just invisible to a model that needs structure. Organizations that skip the work of converting these signals into structured, machine-readable format are leaving their most valuable data on the table.

No closed loop on outcomes. Most HUB AI deployments are one-directional — the model acts, but nobody records whether the action was right. The prior authorization was submitted; did it get approved? The patient was triaged to the specialty pharmacy; did they enroll and stay adherent? Without outcome data feeding back into the model, accuracy plateaus. The AI never learns from the edge cases that matter most in specialty pharma — the complex patient journeys, the multi-step appeals, the cases that fall out of the standard workflow.

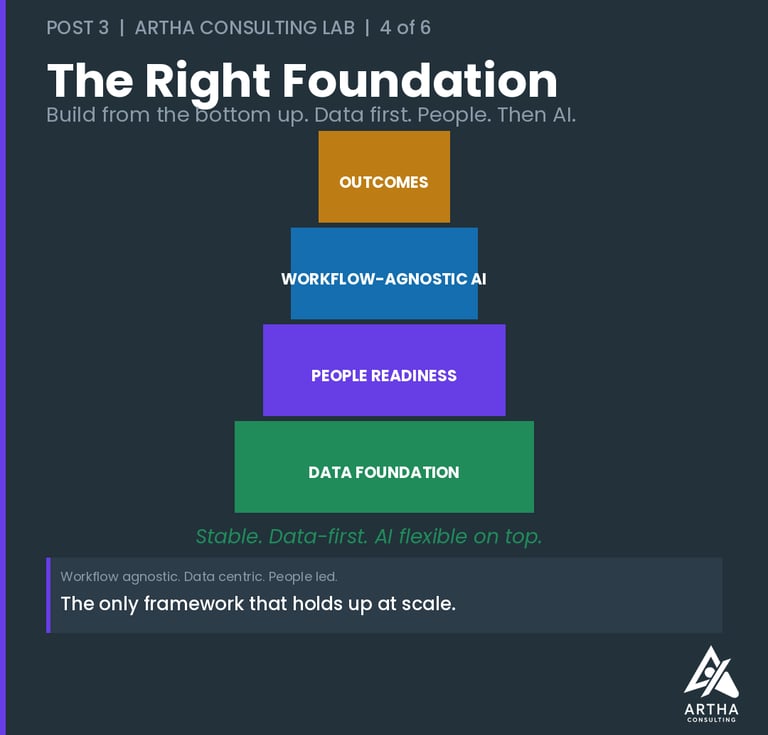

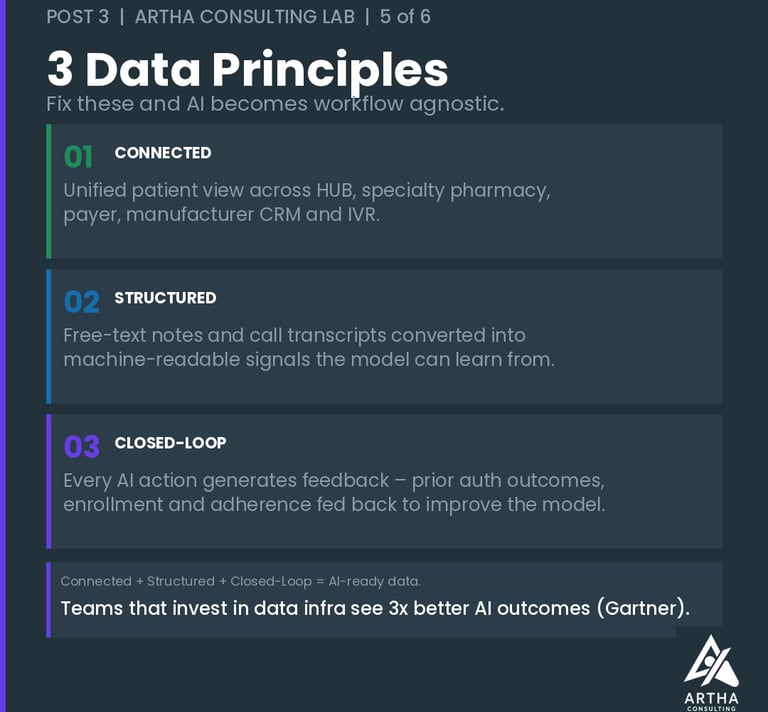

Fix these three things and something important becomes possible: workflow agnosticism. The AI stops caring which HUB platform you’re running, which specialty pharmacy network feeds the triage queue, which CRM your field reimbursement team uses. It works because the data layer underneath is solid — connected, structured, and self-improving.

The Second Problem Nobody Budgets For

Data quality is only half the explanation. The other half is people — and it’s the one I covered in depth in my previous blog. The pattern that separates organizations that scale AI from those that stall isn’t technology. It’s organizational readiness.

Deloitte’s 2025 survey of 1,854 executives found that pharma AI investments take 2–4 years on average to show satisfactory ROI — far beyond the 7–12 months expected from conventional technology investments. The gap isn’t because AI is slow. It’s because organizations arrive at go-live with clean-enough data and unprepared teams — and spend the next 18 months paying for it.

In HUB operations, this pattern is predictable. Case managers who don’t trust AI-generated benefits investigation results will override them manually — reintroducing exactly the inconsistencies the AI was built to eliminate. Field reimbursement managers who weren’t part of the design process will maintain parallel manual prior auth workflows that quietly undermine the data quality the system depends on. Patient access managers who receive recommendations without context or explainability will disengage from the tool entirely.

The organizations that get this right treat data readiness and people readiness as simultaneous investments, not sequential ones. The change program informs what data the team will actually use. The data quality determines what decisions the AI can support. Build them together or pay for it later.

What I’d Build Today

If I were starting a HUB AI program from scratch, here is the order of operations — and it doesn’t begin in a workflow mapping session.

1. Audit the data layer before anything else. Map where patient data actually lives across every system that touches the case — HUB platform, manufacturer CRM, specialty pharmacy, payer portal, IVR, case manager notes. Identify what’s structured, what’s free text, what’s siloed, and what has no feedback loop back to the source. This audit takes weeks, not months. Skipping it costs years.

2. Design for workflow agnosticism from day one. Build AI that adapts to process changes, not AI that’s hard-coded to a specific intake workflow or PA submission path. HUB operations evolve constantly — new payer requirements, platform consolidations, manufacturer program redesigns. If your AI breaks every time a workflow changes, every operational update becomes a re-implementation project. The data layer is the stable foundation. The workflow layer should be interchangeable on top of it.

3. Invest in closed-loop data architecture. Every AI action should generate feedback that improves the next one. A prior authorization submission should close the loop on the approval or denial outcome — and that outcome should feed back into the model. A specialty pharmacy triage should record what happened downstream — enrollment, first fill, adherence at 90 days. A co-pay program referral should capture redemption. Deployments that close these loops get measurably better over time. Deployments that don’t, plateau.

4. Build the people program in parallel — not after go-live. Define how case managers, field reimbursement managers, and patient access teams will interact with AI outputs from the beginning of the design process, not the end. Build explainability into every AI recommendation so that the humans using it can understand, challenge, and trust it. Design feedback mechanisms that let frontline staff flag when the AI is wrong — because they will know before the model does, and their feedback is your most valuable training data.

The Organizations Getting This Right

They share a common pattern. They didn’t start with a vendor selection process or a workflow mapping exercise. They started with an honest assessment of their data — what they had, what was missing, and what it would take to make it AI-ready. They ran their change programs in parallel with their technology builds, not after them. And they designed their AI to be flexible enough to survive the inevitable operational changes that specialty pharma programs go through.

The result is AI that is workflow agnostic — because it doesn’t depend on any single process configuration to function. Data centric — because the quality of its outputs is only as good as the quality of what it’s given. And people led — because no AI in a HUB environment succeeds without the case managers, field teams, and patient access professionals who work with it every day being genuinely part of the design.

That’s the framework. It’s not complicated. But it requires doing the unglamorous work first — and most organizations still reach for the workflow map instead.

More on this topic at the Artha Consulting Lab Blog.

What does your data layer look like today? Drop a comment — I read every one.

— Ankur Jain, J.D., MBA | Healthcare Transformation | Founder, Artha Consulting Lab

#SpecialtyPharma #HUBServices #AIAdoption #DataStrategy #HealthcareAI #ChangeManagement #PatientAccess